Ford CEO Explains Why It’s Hard to Build Self-Driving Cars

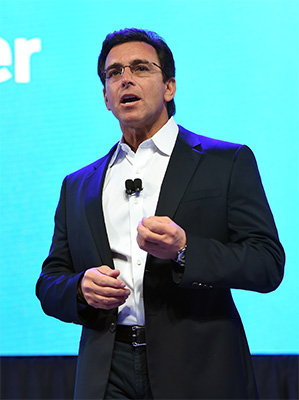

Ford didn’t announce a deal with Google to develop self-driving cars at CES this week, as some had expected. But the automaker did reiterate its commitment to developing autonomous vehicles. CEO Mark Fields announced a new version of Ford’s self-driving test car and said the company will expand its test fleet from 10 to 30 cars this year, letting it gather more information faster in hopes of eventually reaching the point where it can sell such a car to consumers.

Fields sat down with MIT Technology Review to discuss the challenges Ford is facing in building autonomous cars, how we’re likely to start using such vehicles, and the kinds of social cues that will need to be programmed into them to work in real-life driving situations. As for that Google deal, Fields declined to comment, saying only that Ford talks privately with many companies.

Ford has been quieter than many other carmakers regarding automated driving. Why have you been more cautious about the technology?

We take autonomous driving very, very seriously. And we want to make sure that when we talk about something that we have a lot of experience under our belts to inform and to allow us to speak intelligently around what our plans are going forward. But obviously we’re here at CES this year to, at the highest level, really send the message that we are transforming the company from just an auto company to an auto and mobility company, and thinking about it in this more holistic way, through what we call Ford Smart Mobility, and one of the elements is autonomous driving. And that’s why we announced today our even bigger commitment in tripling the [self-driving test] fleet.

What are some of the biggest lessons you’ve learned from the previous driverless car models that were incorporated into the new one?

First off, that there are a lot of different variables. When you originally think you have all the bases covered, you realize there are probably multitudes more that you need to cover.

Weather conditions, for example: when you look at some of the capabilities of the cameras and the sonars and the sensors, some have difficulty operating, for example, in freezing rain or snow or inclement conditions, and we’ve had to work through some of those things. And we’re still working through them.

Yeah, some technologies key to autonomous driving, such as lidar laser scanners, seem to have difficulties in bad weather. How are you trying to solve this?

The way we’re overcoming it is not only are we using lidar sensors but we’re using cameras and sonar, etc., to be able to compensate for, let’s say, inclement weather. So you have—I won’t say redundant systems, but you have systems that will give you a complete picture of things.

Will these problems be solvable in the near future?

I think they’ll be solvable. It’s going to take time. We’ve said that we haven’t put a time frame on when we would be introducing a fully autonomous vehicle, but we’ve said when we do we want to make sure it works, it’s safe, and also it’s accessible to everyone, and not just folks that can afford luxury cars.

Beyond the few features we’ve seen thus far that bring a measure of autonomy to cars, how do you expect autonomous driving to roll out to the general public, both from Ford and from others?

I think first off we would see fully autonomous vehicles launching in defined areas that have been 3-D mapped. And potentially launched with a service—transportation as a service—in mind first. Passengers, a passenger kind of ride-sharing service, those are some of the things we’re thinking about right now.

Will there eventually be different degrees of autonomy for different kinds of drivers and situations?

I think we’ll have varying degrees. I mean, our strategy is twofold. One is to continue to offer great semi-autonomous driving features. I mean, features we have in our vehicles today where we lead in a lot of different segments, whether it’s vehicles that keep you in your lane or help you parallel or perpendicular park automatically, automatically brake, those kind of things. We’re also, at the same time, having a dedicated team working toward delivering a fully autonomous vehicle, but in a defined area. Our view is longer term that there will be fully autonomous vehicles where the driver doesn’t have to be involved, but in the near term obviously it’s going to be more semi-autonomous features.

Autonomous vehicles so far refuse to break the law—even when that may be the safest thing to do, like to avoid an accident. How do we add that aspect of intelligence to driverless cars?

We’re going to have to work at it. Let’s use the example of when an autonomous vehicle comes to a crosswalk, and a lot of times today when you’re trying to cross the street, if there’s no light, if you’re the pedestrian, you want to look to make sure the vehicle isn’t creeping forward. You want to make eye contact. So I think we have to think through that.

Or let’s say you’re at a four-way stop. The rule is whoever gets there first and stops can go. But as you know, people don’t always follow the law. So one of the things is how would we program into the vehicle that when you come to a four-way stop that you can actually start creeping forward a bit to signal to the other cars that you’re going through. I think this is part of the development process we have in front of us.
