This Climate Policy Could Save the Planet

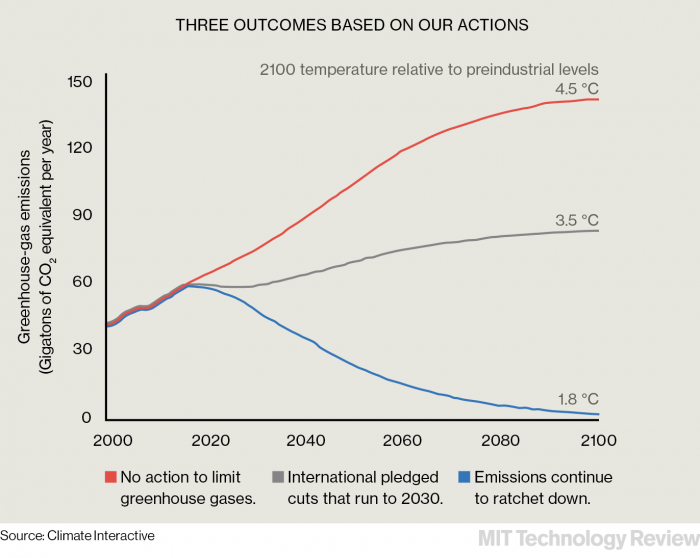

International climate-change negotiators are focused on keeping global warming at or below 2 °C above preindustrial levels, the limit beyond which the U.N.’s Intergovernmental Panel on Climate Change says the consequences of global warming will become catastrophic. But even though negotiators may have finally made some progress on agreements to reduce emissions, there is a big problem: we’re already about halfway to the 2 °C threshold. Last October, for example, was the warmest October in 135 years of record-keeping, with a global average temperature 1.04 °C above the preindustrial level. It was no aberration: 2015 is almost certain to have been the warmest year on record, surpassing the previous record set in 2014.

Even as flocks of jets began descending upon Paris for the latest talks, the delegates could see that the consequences of global warming had been setting in fast. Ice sheets in Greenland and Antarctica are shrinking with unexpected speed, Arctic sea ice is disappearing faster than forecast in computer models, and circulation patterns over vast swaths of the planet’s oceans are being disrupted. “The more we learn, the more we see that these processes are happening more quickly than we anticipated,” says Noah Diffenbaugh, a professor of earth system science at Stanford.

These trends highlight the uncertainty of climate models and the somewhat arbitrary nature of the threshold set by the U.N. panel. The fact is that no one really knows how high the global average temperature will climb once the concentration of carbon dioxide in the atmosphere stays above 400 parts per million (a level it reached, on a monthly average basis, for the first time last March), nor what the consequences for humanity will be in a world that is 2 °C hotter than it was in the preindustrial era. And even if we do accept the goal of keeping warming below 2 °C, we still don’t know exactly what will have to happen to carbon dioxide emissions to make that possible. According to a 2014 report from the U.N. Environment Program, the maximum amount of additional carbon dioxide that can be emitted without raising the average temperature by more than 2 °C is about 1.1 trillion metric tons. (In 2014 the world emitted 35.9 billion metric tons of carbon dioxide.) But that is only an estimate.
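For a rough sense of scale, dividing the budget by one year’s emissions gives the number of years the budget would last if emissions simply held flat at the 2014 level. This is a back-of-the-envelope sketch, not a projection: constant emissions is a simplifying assumption, and both numbers are just the figures quoted in the paragraph above.

```python
# Figures quoted in the paragraph above. Only their ratio matters, so the
# calculation just needs both to be expressed in the same units (metric tons).
remaining_budget_t = 1.1e12   # about 1.1 trillion metric tons (2014 U.N. estimate)
annual_emissions_t = 35.9e9   # 2014 global emissions

# Simplifying assumption: emissions stay flat at the 2014 level every year.
years_left = remaining_budget_t / annual_emissions_t
print(f"Budget exhausted in roughly {years_left:.0f} years at 2014 rates")
```

That works out to about 31 years, which also shows why the estimate matters: a 10 percent error in the budget moves that horizon by about three years.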

So how do you formulate international climate policy given the scientific uncertainties? A number of experts are calling for a self-adjusting policy mechanism that establishes a simple formula for progressive emissions cuts based on empirical data, rather than limits set years in advance. By responding to what’s already happened, rather than what scientists conclude is likely to happen, such a system would, at least in theory, sidestep the uncertainty of climate forecasts.

A flexible, self-correcting system for emissions cuts would be “anti-fragile”: it would get stronger in the face of uncertainty.

This new approach took form in an August 2015 paper published in Nature Climate Change by a group of researchers headed by Myles Allen, a professor of geosystem science at the University of Oxford, and Friederike Otto, a lecturer in physical geography at Oxford and a research fellow at the Environmental Change Institute. The paper, titled “Embracing Uncertainty in Climate Change Policy,” argued that a flexible, self-correcting system would be “anti-fragile,” in that “uncertainty and changes in scientific knowledge make the policy more successful by allowing for trial and error at low societal costs.”

Think of the U.S. Federal Reserve. The Fed doesn’t set interest rates far into the future by gazing at computer models of the economy and predicting GDP growth and inflation two decades out; it monitors key indicators, reviews its positions, and adjusts interest rates accordingly. It has succeeded remarkably well at keeping inflation in check even as parts of the economy, such as the mortgage lending sector, periodically blow up.

That approach contrasts with what’s known as the precautionary principle—the doctrine that policy makers should base their decisions on avoiding the worst-case scenario. Precautionary models developed by economists including Martin Weitzman, of Harvard, dictate that even if the risks of climate catastrophe are small, its effects would be so radical that it should be avoided at almost any cost. The problem with such an approach is that it requires politicians to marshal tremendous resources and take aggressive actions—such as drastically limiting carbon emissions in poor countries like India—that may be unrealistic and even harmful.

The system Allen and Otto propose would respond directly to the amount of measurable warming attributable to human activity. The scheme has a straightforward prescription: the world must reduce emissions by 10 percent for every one-tenth of one degree of warming (beyond the 1 °C mark we have already effectively reached). As temperatures near 2 °C of warming, emissions ratchet progressively downward, eventually to near zero. The system responds to uncertain outcomes with a built-in self-adjusting mechanism.
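The prescription in the paragraph above fits in a single function. The Python below is a minimal sketch, not the authors’ code, and the name `allowed_emissions` is hypothetical. It assumes that each 10 percent cut is measured against a fixed baseline (so ten steps bring emissions to zero at 2 °C), that the cut scales smoothly with warming rather than jumping in discrete 0.1 °C steps, and it uses the article’s 2014 emissions figure only as an illustrative baseline.

```python
def allowed_emissions(attributable_warming_c: float, baseline: float) -> float:
    """Annual emissions permitted by a 10%-per-0.1 °C ratchet (illustrative sketch).

    Assumption: each 0.1 °C of human-caused warming above 1.0 °C removes
    10 percent of a fixed baseline, so emissions reach zero at 2.0 °C.
    """
    threshold_c = 1.0    # warming the article says is already effectively reached
    step_c = 0.1         # size of one warming increment
    cut_per_step = 0.10  # fraction of the baseline cut per increment

    excess_c = max(0.0, attributable_warming_c - threshold_c)
    cut_fraction = min(1.0, cut_per_step * excess_c / step_c)
    return baseline * (1.0 - cut_fraction)


if __name__ == "__main__":
    baseline = 35.9  # billion metric tons per year, the 2014 figure (illustrative)
    for warming in (1.0, 1.2, 1.5, 1.8, 2.0, 2.2):
        print(f"{warming:.1f} °C -> {allowed_emissions(warming, baseline):.1f}")
```

The linear reading is a deliberate choice: if each step instead cut 10 percent of whatever remained, emissions at 2 °C would still be about 35 percent of the baseline (0.9^10 ≈ 0.35), which would not match the “near zero” endpoint described above.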

There are advantages to this system, and some pitfalls. Among the benefits is that it gives policy makers and governments political cover: whether the observed warming is less than or greater than predicted, the system responds automatically to the signals. The formula for those adjustments is agreed to in advance. “If you’re waiting for science to provide exact certainty about regional local change over a multi-decade period, you’re going to be waiting forever,” says Diffenbaugh.
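To illustrate what responding “automatically to the signals” means, here is a toy simulation, again a hypothetical sketch rather than anything from the paper. It assumes, purely for illustration, that each billion tons emitted adds a fixed amount of warming (the made-up parameter `warming_per_gt`, given a low and a high value to stand in for a climate that turns out milder or harsher than predicted), that warming responds with no lag, and that the same linear 10-percent-per-0.1 °C rule is applied to a baseline of 35.9.

```python
def allowed_emissions(warming_c: float, baseline: float) -> float:
    # Assumed rule: 10% of a fixed baseline cut per 0.1 °C above 1.0 °C.
    cut = min(1.0, 0.10 * max(0.0, warming_c - 1.0) / 0.1)
    return baseline * (1.0 - cut)


def simulate(warming_per_gt: float, years: int = 50,
             baseline: float = 35.9, start_warming_c: float = 1.0):
    """Return (warming, emissions) for each year of a toy, lag-free climate."""
    warming = start_warming_c
    history = []
    for _ in range(years):
        emissions = allowed_emissions(warming, baseline)  # policy reads observed warming
        warming += warming_per_gt * emissions             # toy climate response
        history.append((warming, emissions))
    return history


if __name__ == "__main__":
    # Hypothetical sensitivities for a milder and a harsher climate than expected.
    for label, sensitivity in (("milder", 0.00045), ("harsher", 0.0007)):
        final_warming, final_emissions = simulate(sensitivity)[-1]
        print(f"{label}: warming {final_warming:.2f} °C, "
              f"emissions {final_emissions:.1f} in year 50")
```

In both runs the same formula, agreed in advance, cuts emissions faster when warming comes in higher, and for these parameter values warming approaches 2 °C without crossing it. The real climate responds with delays, so the sketch shows only the feedback logic, not a forecast.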

What the Allen-Otto system doesn’t do is account for the uncertain effects of specific amounts of warming—it assumes nothing about sea-level rise, the frequency of extreme weather events, or other unpredictable effects of a warmer planet. “We are framing this system on the premise that the world has agreed to avoid more than 2° of warming, independent of the effects,” says Allen. “It would be hard to go further than that in an international system, because you would need to get agreement on the degree of unacceptability of impacts.”

The larger problem, of course, will be putting such a system in place. Getting politicians to commit to voluntary emissions limits decades in advance has taken many climate summits, over many years. Getting them to agree to a system that enforces bigger and bigger cuts based on a formula is a challenge of a different order. But as evidence of the consequences of climate change accumulates in unpredictable ways, this system may be the best way to make the cuts we need.
