How Brain Scientists Outsmart Their Lab Mice

Scientists can now observe the brains of lab animals in microscopic detail as the animals go about some action. A technique called two-photon imaging, in particular, allows neuroscientists to watch thousands of neurons working in concert to encode information.

The trouble is, two-photon imaging requires the animal’s head to stay fixed in place. That would seem to preclude watching the brain as the animal does anything of much interest.

One creative solution is virtual reality, a computer-generated environment that people usually experience through a headset. A few years ago neuroscientists started designing tiny virtual-reality systems to fool mice into thinking they were navigating a maze when they were really running on top of a large ball, their heads fixed in position.

Until now, however, mice needed weeks of training before they would run on the ball. Nicholas Sofroniew, working with others at HHMI's Janelia Research Campus in Virginia, created a tactile virtual maze the mice seem to understand right away: they navigate through virtual corridors without training. More recently, he has been working with Jeremy Freeman to expand the complexity of the system.

It’s designed to exploit the way mice navigate in nature, Freeman says. Instead of relying primarily on their eyes, mice rely heavily on their whiskers to feel their way through the world.

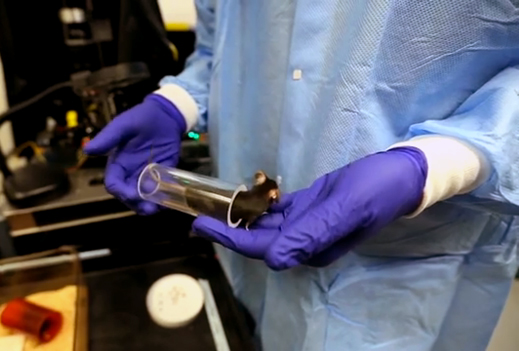

In the whisker-oriented virtual reality, the walls move to give the mouse the illusion that it is running down winding corridors, he says. But the whole time, the rodent’s head is stationary.

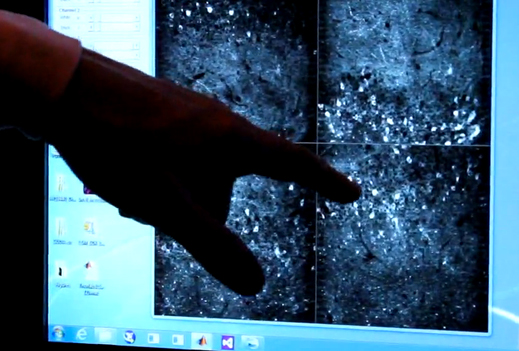

Karel Svoboda, a senior researcher on the project, says they’ve already learned that different neurons fire depending on the distance between the mouse’s head and the wall. The brain seems to be translating input from the whiskers into a form the mouse can use.

The imaging technique, which Svoboda helped develop, relies on fluorescent proteins from jellyfish. The researchers genetically alter the mice so their cells make this fluorescent protein in a form that glows more brightly when it binds calcium ions. Calcium rushes into a neuron when it fires, so the tagged neurons light up in concert with brain activity. To see and record what's going on, the researchers replace a chunk of the animals' skulls with a little window.
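To give a rough sense of how a glowing neuron becomes a measurement, here is a minimal sketch of a common way to normalize a fluorescence trace, often written ΔF/F: the change in brightness relative to a resting baseline. The article does not describe the researchers' actual analysis, so the function name, the use of the median as the baseline, and the synthetic numbers below are all assumptions made for illustration.

```python
from statistics import median


def delta_f_over_f(trace):
    """Return (F - F0) / F0 for each frame of one neuron's brightness trace.

    F0 is taken to be the median brightness, an assumed stand-in for the
    resting level; real pipelines may estimate the baseline differently.
    """
    f0 = median(trace)
    if f0 <= 0:
        raise ValueError("baseline fluorescence must be positive")
    return [(f - f0) / f0 for f in trace]


# Synthetic trace (made-up values): resting brightness of 100 with one
# brief rise, standing in for a burst of calcium when the neuron fires.
trace = [100.0] * 50
trace[20:25] = [150.0, 180.0, 160.0, 130.0, 110.0]

dff = delta_f_over_f(trace)
peak_frame = max(range(len(dff)), key=lambda i: dff[i])
print(f"peak at frame {peak_frame}, dF/F = {dff[peak_frame]:.2f}")
```

Dividing by the baseline makes bright and dim cells comparable, which matters when thousands of neurons are recorded at once.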

Scientists have long been able to “listen” to single neurons using electrodes, says Svoboda, but that’s like being able to hear only one instrument during a symphony. Now, he says, they can watch the way information flows through the brain while the mouse is learning to cope with a new, albeit virtual, environment.

Even though the mouse’s head doesn’t move, it’s engaged in what Svoboda calls active sensation. We do it when we move our eyes around to explore our surroundings. Mice do that as well, and they also move their whiskers around to explore by feel. The mouse brain seems to use sets of neurons to represent distances, he says.

Ultimately, the researchers hope to understand how the brain computes information. That could help uncover what happens in disorders such as autism. “We want to understand how brains do everything involved in sensing, learning, and decision-making,” says Freeman.

What they’d really like is to understand the mechanism of learning and to get at the nature of intelligence. That’s a hard problem, he says, “but trying to understand the brain while exploring immersive environments is one of our best shots.”
