Augmented-Reality Glasses Could Help Legally Blind Navigate

Though it looks like a pop-art installation you might find in a Brooklyn gallery, the cartoonish, high-contrast black-and-white world you see when looking through a pair of Stephen Hicks’s augmented-reality glasses is actually part of a plan to help the legally blind see better.

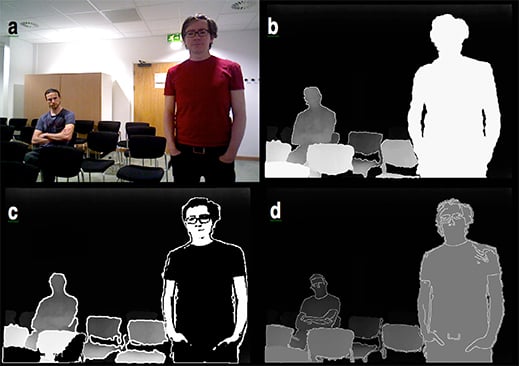

Hicks, a research fellow in neuroscience and visual prosthetics at the University of Oxford, is the cofounder of VA-ST, a university spin-off building glasses that use a depth sensor and software to highlight the outlines of nearby people and objects and simplify their features. The glasses have four different modes that show the world around you in black, white, and gray with varying degrees of detail and cartoonishness, as well as a regular color mode that can be used simply to zoom in on objects or pause the view.

Most people who are classified as legally blind actually retain some vision, but may not be able to pick out faces and obstacles, particularly in low light. Because of this, Hicks believes that his device, called Smart Specs, could make it easier for some people with sight impairment to explore their surroundings.

“This helps identify nearby things and really make them stand out,” he says.

In June, VA-ST began a study in the U.K. through which it’s loaning out prototypes of its glasses and an attached controller box to 300 people with eye conditions like macular degeneration, glaucoma, and retinitis pigmentosa for four weeks at a time. Since the box can record which settings study participants use and when, along with motion data from a built-in accelerometer, researchers will be able to see how people with different vision problems use the glasses in daily life.

Hicks says VA-ST hopes to begin selling a version of its setup early next year for under $1,000.

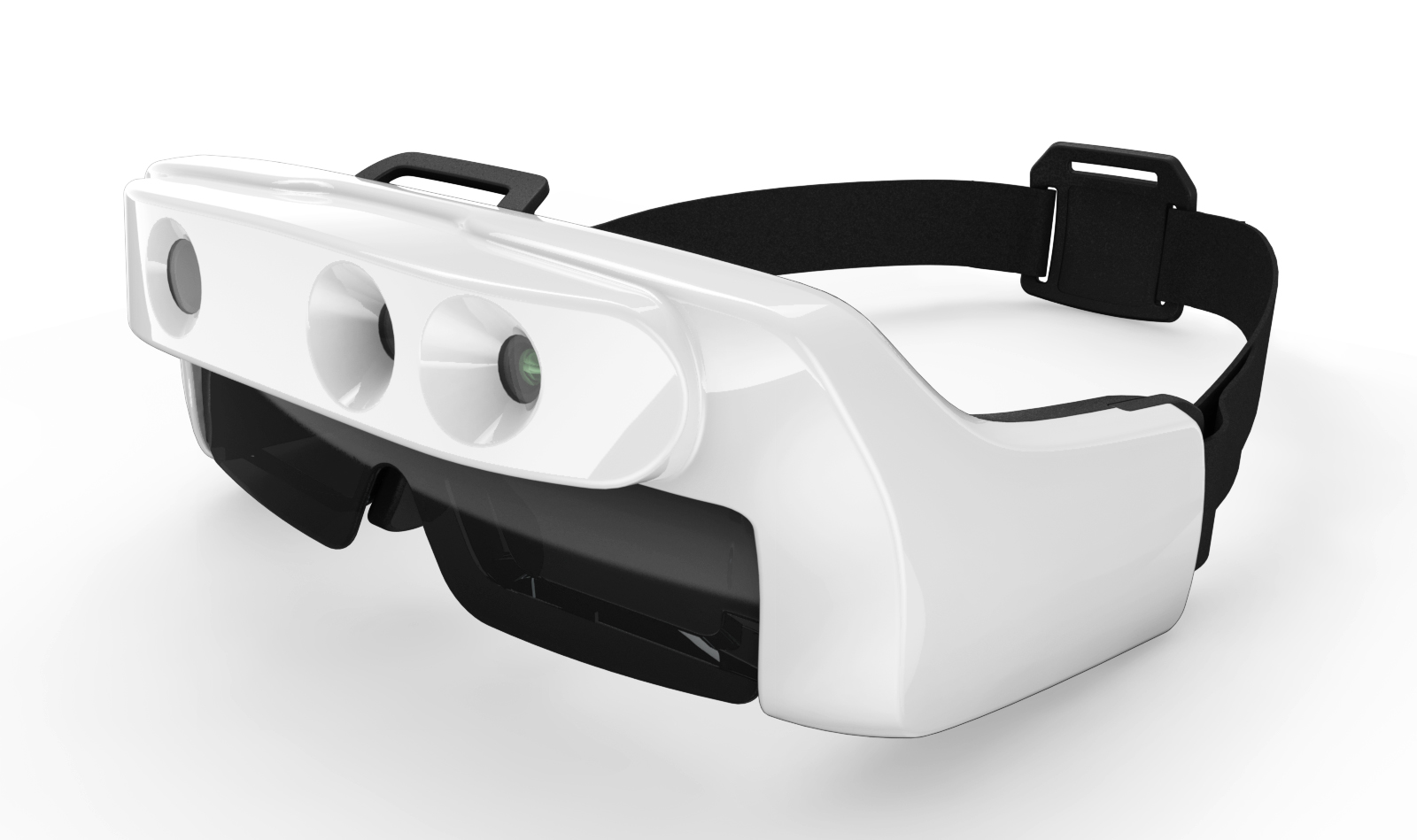

The current version of the Smart Specs consists of a bulky, shiny plastic headset that includes a pair of Epson Moverio augmented-reality glasses as its display, along with an Asus gadget that combines a depth camera and a regular color camera. The chunky eyewear straps around the wearer’s head and connects to a slim box with several knobs on its side, which users also have to carry around. The box contains an Android computer that handles the image processing for the glasses, along with a battery pack that powers the system for up to eight hours at a time. The setup is impossible to ignore, but it is smaller than the previous prototype, which tethered the glasses to a laptop computer.

The regular camera in the Asus device captures your surroundings, while the depth camera measures the distance to each object and surface. Software running on the computer in the control box uses those depth measurements to decide what to highlight and what to ignore. If a woman stands about 10 feet in front of you, VA-ST’s software will give her an animated, exaggerated look that depends on the setting you’re using. In one setting, for instance, she appears in black and white with a white outline and just a few major features, such as her glasses, nose, and mouth. Other people and objects a bit farther away appear gray, and the background looks completely black.
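
The article doesn't spell out how VA-ST's software works internally, but the depth-based layering it describes (near things in high-contrast black and white with a white outline, farther things gray, the background black) can be illustrated with a short Python sketch. It assumes a grayscale frame and a depth map already aligned pixel for pixel, and it uses hypothetical cutoffs of 3 and 4.5 metres (roughly the 10 and 15 feet mentioned in the article). The function name `render_frame`, the thresholds, and the simple outline test are illustrative assumptions, not VA-ST's actual method.

```python
from typing import List

# Hypothetical distance cutoffs in metres (about 10 ft and 15 ft). These echo the
# distances mentioned in the article; they are NOT VA-ST's published parameters.
NEAR_M = 3.0
FAR_M = 4.5

BLACK, GRAY, WHITE = 0, 128, 255


def render_frame(
    gray: List[List[int]],
    depth: List[List[float]],
    near_m: float = NEAR_M,
    far_m: float = FAR_M,
) -> List[List[int]]:
    """Reduce one camera frame to black, gray, and white using per-pixel depth.

    gray:  8-bit brightness from the regular camera (0-255).
    depth: distance in metres from the depth camera; values <= 0 mean "no reading".
    """
    h, w = len(depth), len(depth[0])
    # Pixels close enough to be drawn in high-contrast detail.
    near = [[0 < depth[y][x] <= near_m for x in range(w)] for y in range(h)]
    out = [[BLACK] * w for _ in range(h)]

    for y in range(h):
        for x in range(w):
            d = depth[y][x]
            if near[y][x]:
                # A near pixel that borders a farther pixel lies on the outline.
                on_outline = any(
                    0 <= y + dy < h and 0 <= x + dx < w and not near[y + dy][x + dx]
                    for dy, dx in ((-1, 0), (1, 0), (0, -1), (0, 1))
                )
                if on_outline:
                    out[y][x] = WHITE
                else:
                    # Inside the nearby object: keep only a two-tone version of its features.
                    out[y][x] = WHITE if gray[y][x] >= 128 else BLACK
            elif 0 < d <= far_m:
                out[y][x] = GRAY  # a bit farther away: flat gray silhouette
            # Anything beyond far_m, or with no depth reading, stays black.
    return out
```

Calling `render_frame(gray, depth)` on each incoming frame would yield the simplified image to send to the display. A real system would likely use a proper edge detector and tune the cutoffs per user and per mode; the fixed thresholds here only show how depth can decide what gets highlighted and what gets ignored.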

“Walking into a restaurant or a bar, the lighting changes so dramatically that no one can see at all,” Hicks says. “This would help highlight chairs.”

I tried out Smart Specs at a technology conference in Santa Clara, California, this month. For someone with normal vision, viewing the world through VA-ST’s lenses is like being inside a minimalist animated film. Hicks compares it to Richard Linklater’s trippy Waking Life, which uses rotoscoping, a technique of drawing over live-action film footage.

Staring at Hicks, I saw him in black and white, with simplified features and the plaid of his shirt reduced to a basic crisscross pattern. Other people standing in the distance appeared as black figures with white outlines.

Hicks says one of the biggest challenges now is getting long-range depth cameras that can work well with his glasses, which require a range of about 15 feet.

James Weiland, a professor of ophthalmology and biomedical engineering at the University of Southern California who is working on a head-worn visual aid, says Smart Specs look potentially useful to people with low vision. He points out, however, that there may be important visual information in the background, such as doors, that VA-ST’s technology wouldn’t show unless you’re close to it.

Smart Specs may also need to become much lighter and, perhaps, better looking.

“Some will accept it because of the benefit it provides,” Weiland says. “But some will say, ‘I don’t like to look like I have a big thing on my head.’ ”

Hicks agrees; he says he’d like to shrink them down “to make them look much more normal.”
