Watch Your Language

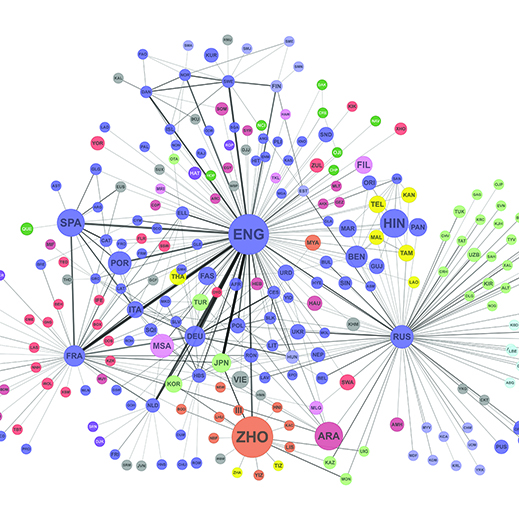

By analyzing data on book translations, multilingual Twitter users, and multilingual Wikipedia editors, researchers at MIT, Harvard, Northeastern, and Aix-Marseille University have developed network maps that they say represent the strength of the cultural connections between speakers of different languages.

In a paper in the Proceedings of the National Academy of Sciences, they showed that a language’s centrality in their network better predicts the global fame of its speakers than either the population or the wealth of the countries in which it is spoken.

“The global social network is structured through these circuitous paths in which people in some language groups are by definition way more central than others,” says Cesar Hidalgo, assistant professor of media arts and sciences and senior author on the paper. “That gives them a disproportionate power and responsibility.”

In two of the network maps, the strength of the connections between any two languages depended on the number of Twitter users or Wikipedia editors who had demonstrated facility in both of them. The third map was based on UNESCO’s Index Translationum, which catalogues 2.2 million book translations in more than 1,000 languages.
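The link-counting idea behind the Twitter and Wikipedia maps can be sketched in a few lines of Python. This is a minimal illustration, not the researchers' actual pipeline: the function names, the toy user data, and the use of weighted degree as a stand-in for "centrality" are all assumptions, since the article does not say which centrality measure the paper used.

```python
from collections import Counter
from itertools import combinations


def build_language_links(users_languages):
    """Count, for each unordered language pair, how many users use both."""
    links = Counter()
    for langs in users_languages:
        # sorted() gives each pair a canonical order, e.g. ("en", "es")
        for a, b in combinations(sorted(set(langs)), 2):
            links[(a, b)] += 1
    return links


def weighted_degree(links):
    """Sum the link weights touching each language (a simple centrality proxy)."""
    strength = Counter()
    for (a, b), weight in links.items():
        strength[a] += weight
        strength[b] += weight
    return strength


# Hypothetical toy data: each set lists the languages one user writes in.
toy_users = [
    {"en", "es"},
    {"en", "fr"},
    {"en", "es", "pt"},
    {"ru"},  # monolingual users contribute no links
]

links = build_language_links(toy_users)
strength = weighted_degree(links)
```

In this toy example, English ends up with the highest total link weight because it co-occurs with every other language that has a multilingual user, while a language with no multilingual users stays disconnected.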

To measure global fame, the researchers identified people with Wikipedia entries in at least 26 languages. They also looked at the 4,002 people profiled in the American political scientist Charles Murray’s book Human Accomplishment. In both cases, at least one of the language centrality networks correlated more strongly with fame than either the number of speakers of a language or the GDP of the countries in which it is spoken.
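The fame comparison can be sketched the same way: count the people per language who pass the 26-edition threshold, then correlate those counts with a candidate predictor. This is only a sketch under stated assumptions. The record format, the way each person is assigned to a single language, the choice of Pearson correlation, and the toy records are all hypothetical; only the threshold of 26 comes from the article.

```python
from collections import Counter
from math import sqrt

MIN_EDITIONS = 26  # threshold stated in the article


def count_famous_by_language(people, min_editions=MIN_EDITIONS):
    """people: iterable of (language, number_of_wikipedia_editions) pairs."""
    counts = Counter()
    for language, editions in people:
        if editions >= min_editions:
            counts[language] += 1
    return counts


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Hypothetical toy records, invented purely for illustration.
toy_people = [("en", 40), ("en", 30), ("en", 12), ("es", 26), ("fr", 25)]
famous = count_famous_by_language(toy_people)
```

Given per-language lists of fame counts and of a predictor such as centrality, speaker population, or GDP, calling `pearson(predictor, fame_counts)` for each predictor would show which one tracks fame most closely.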
