Linguistic Mapping Reveals How Word Meanings Sometimes Change Overnight

In October 2012, Hurricane Sandy approached the eastern coast of the United States. At the same time, the English language was undergoing a small earthquake of its own. Just months before, the word “sandy” was an adjective meaning “covered in or consisting mostly of sand” or “having light yellowish brown color.” Almost overnight, this word gained an additional meaning as a proper noun for one of the costliest storms in U.S. history.

A similar change occurred to the word “mouse” in the early 1970s when it gained the new meaning of “computer input device.” In the 1980s, the word “apple” became a proper noun synonymous with the computer company. And later, the word “windows” followed a similar course after the release of the Microsoft operating system.

All this serves to show how language constantly evolves, often slowly but at other times almost overnight. Keeping track of these new senses and meanings has always been hard. But not anymore.

Today, Vivek Kulkarni at Stony Brook University in New York and a few pals show how they have tracked these linguistic changes by mining the corpus of words stored in databases such as Google Books, movie reviews from Amazon, and of course the microblogging site Twitter.

These guys have developed three ways to spot changes in the language. The first is a simple count of how often words are used, using tools such as Google Trends. For example, in October 2012, the frequency of the words “Sandy” and “hurricane” both spiked in the run-up to the storm. However, only one of these words changed its meaning, something that a frequency count cannot spot.
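To make the frequency idea concrete, here is a minimal sketch in Python. It is not the team's code: the function name `relative_frequency` and the tiny `toy_corpus` are invented for illustration, and the tokenizer is just a whitespace split.

```python
from collections import Counter

def relative_frequency(docs_by_period, word):
    """Return {period: share of all tokens in that period equal to `word`}."""
    series = {}
    for period, docs in docs_by_period.items():
        # Naive tokenization: lowercase and split on whitespace.
        tokens = [tok.lower() for doc in docs for tok in doc.split()]
        counts = Counter(tokens)
        series[period] = counts[word.lower()] / len(tokens) if tokens else 0.0
    return series

# Hypothetical toy data, invented for this example.
toy_corpus = {
    "2012-08": [
        "the sandy beach was quiet",
        "we walked along the shore at dusk",
    ],
    "2012-10": [
        "hurricane sandy nears the coast",
        "sandy could flood the city",
        "the hurricane is strengthening",
    ],
}

sandy = relative_frequency(toy_corpus, "sandy")
hurricane = relative_frequency(toy_corpus, "hurricane")
print(sandy)
print(hurricane)
```

Both series rise in the second period, which is exactly the limitation described above: the count shows that something happened, but not which word changed its meaning.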

So Kulkarni and co have a second method in which they label all of the words in the databases according to their parts of speech, whether a noun, a proper noun, a verb, an adjective and so on. This clearly reveals a change in the way the word “Sandy” was used, from adjective to proper noun, while also showing that the word “hurricane” had not changed.
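A rough sketch of the parts-of-speech idea follows. It assumes the text has already been tagged (the toy tags below are written by hand, using Universal POS labels such as `ADJ` and `PROPN`), and it uses the Jensen-Shannon divergence as one reasonable way to compare two tag distributions. The helper names and data are illustrative, not taken from the team's software.

```python
import math
from collections import Counter

def pos_distribution(tagged_tokens, word):
    """Share of each part-of-speech tag among occurrences of `word`."""
    tags = Counter(tag for tok, tag in tagged_tokens if tok.lower() == word.lower())
    total = sum(tags.values())
    return {tag: n / total for tag, n in tags.items()} if total else {}

def js_divergence(p, q):
    """Jensen-Shannon divergence (base 2) between two tag distributions."""
    keys = set(p) | set(q)
    m = {k: 0.5 * (p.get(k, 0.0) + q.get(k, 0.0)) for k in keys}

    def kl(a, b):
        return sum(a[k] * math.log2(a[k] / b[k]) for k in a if a[k] > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

# Hypothetical hand-tagged tokens, invented for this example.
before = [("the", "DET"), ("sandy", "ADJ"), ("beach", "NOUN"),
          ("a", "DET"), ("hurricane", "NOUN")]
after = [("hurricane", "NOUN"), ("sandy", "PROPN"), ("hit", "VERB")]

sandy_shift = js_divergence(pos_distribution(before, "sandy"),
                            pos_distribution(after, "sandy"))
hurricane_shift = js_divergence(pos_distribution(before, "hurricane"),
                                pos_distribution(after, "hurricane"))
print(sandy_shift, hurricane_shift)
```

In this toy case "sandy" flips entirely from adjective to proper noun, so its divergence is at the maximum, while "hurricane" stays a noun and scores zero.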

The parts-of-speech technique is useful but not infallible. It cannot pick up the change in meaning of the word “mouse,” because the word is a noun in both its old and new senses. So the team have a third approach.

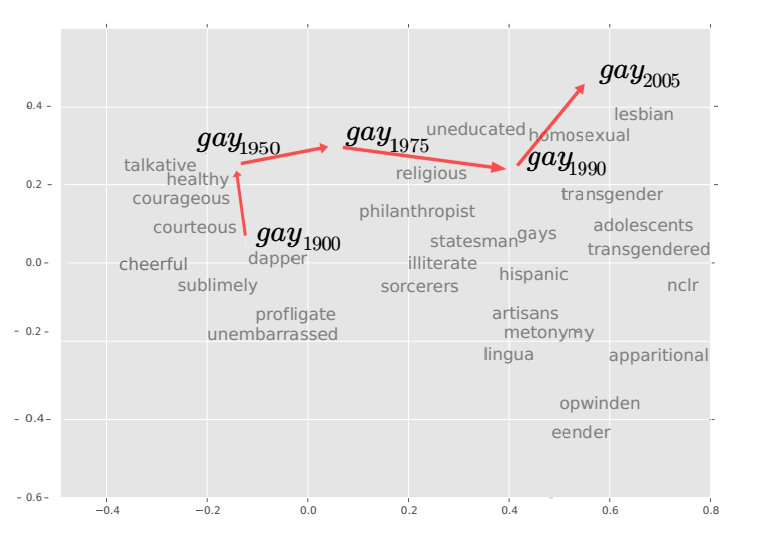

This maps the linguistic vector space in which words are embedded. The idea is that words in this space are close to other words that appear in similar contexts. For example, the word “big” is close to words such as “large,” “huge,” “enormous,” and so on.
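The notion of closeness can be illustrated with a deliberately simple stand-in. Real systems usually learn dense word vectors from large corpora; the sketch below instead represents each word by raw counts of its neighboring words and compares words by cosine similarity. The function names, the window size, and the sentences are all assumptions made for the example.

```python
import math
from collections import Counter, defaultdict

def cooccurrence_vectors(sentences, window=2):
    """Map each word to a Counter of the words seen within `window` positions."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.lower().split()
        for i, word in enumerate(tokens):
            lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    vectors[word][tokens[j]] += 1
    return vectors

def cosine(u, v):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(u[k] * v[k] for k in u if k in v)
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

# Hypothetical toy sentences, invented for this example.
sentences = [
    "a big house stood there",
    "a large house stood there",
    "a big dog barked loudly",
    "a large dog barked loudly",
    "she reads quietly at night",
]

vecs = cooccurrence_vectors(sentences)
big_large = cosine(vecs["big"], vecs["large"])
big_quietly = cosine(vecs["big"], vecs["quietly"])
print(big_large, big_quietly)
```

Because “big” and “large” turn up in the same surroundings, their vectors point the same way, while “quietly” shares no context with either.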

By examining the linguistic space at different points in history, it is possible to see how meanings have changed. For example, in the 1950s, the word “gay” was close to words such as “cheerful” and “dapper.” Today, however, it has moved significantly to be closer to words such as “lesbian,” “homosexual,” and so on.
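One way to picture the comparison across time is to collect the contexts of a single word in two periods and measure how far apart they are. The sketch below does this with raw context counts and cosine distance, which sidesteps the problem of aligning two separately learned spaces. It is a simplification for illustration, and the helper names and period labels are hypothetical.

```python
import math
from collections import Counter

def context_counts(sentences, target, window=2):
    """Count the words appearing within `window` positions of `target`."""
    counts = Counter()
    for sentence in sentences:
        tokens = sentence.lower().split()
        for i, tok in enumerate(tokens):
            if tok == target:
                lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
                counts.update(tokens[j] for j in range(lo, hi) if j != i)
    return counts

def cosine_distance(u, v):
    """1 minus cosine similarity; 0 means identical contexts."""
    dot = sum(u[k] * v[k] for k in u if k in v)
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    if not norm_u or not norm_v:
        return 1.0
    return 1.0 - dot / (norm_u * norm_v)

# Hypothetical toy sentences for two periods, invented for this example.
earlier = ["the mouse ate the cheese", "a mouse hid in the barn",
           "the barn door was open"]
later = ["click the mouse button twice", "move the mouse to the icon",
         "the barn door was open"]

mouse_shift = cosine_distance(context_counts(earlier, "mouse"),
                              context_counts(later, "mouse"))
door_shift = cosine_distance(context_counts(earlier, "door"),
                             context_counts(later, "door"))
print(mouse_shift, door_shift)
```

Here “mouse” drifts because its neighbors change from cheese and barns to buttons and icons, while the control word “door” keeps the same company and does not move.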

Kulkarni and co examine three different databases to see how words have changed: the set of five-word sequences that appear in the Google Books corpus, Amazon movie reviews since 2000, and messages posted on Twitter between September 2011 and October 2013.

Their results reveal not only which words have changed in meaning, but when the change occurred and how quickly. For example, before the 1970s, the word “tape” was used almost exclusively to describe adhesive tape but then gained an additional meaning of “cassette tape.”
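Pinning down when a change happened can be sketched as a change-point search over a time series of such distances. The version below simply tries every split point and keeps the one with the largest jump in the mean. The numbers are made up to echo the “tape” example, and the function name is hypothetical. A fuller treatment would also check whether the jump is statistically significant, for instance by comparing it against shuffled versions of the series.

```python
def mean_shift_change_point(series):
    """Return (index, shift) for the split with the largest rise in the mean.

    `index` is the position of the first point after the change.
    """
    best_index, best_shift = None, float("-inf")
    for j in range(1, len(series)):
        before, after = series[:j], series[j:]
        shift = sum(after) / len(after) - sum(before) / len(before)
        if shift > best_shift:
            best_index, best_shift = j, shift
    return best_index, best_shift

# Hypothetical per-decade distances of "tape" from its earliest usage.
decades = [1940, 1950, 1960, 1970, 1980, 1990, 2000]
distances = [0.05, 0.04, 0.06, 0.40, 0.45, 0.50, 0.48]

index, shift = mean_shift_change_point(distances)
change_decade = decades[index]
print(change_decade, round(shift, 4))
```

With these invented values, the largest jump falls at the split just before 1970, the point where the series leaves its low baseline.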

Prior to the 1950s, the word “plastic” generally meant “flexible,” but the introduction of polystyrene popularized its use as the name for a synthetic polymer. And before the 1970s, the word “diet” meant the food consumed by an individual. But after the publication in 1972 of Dr. Atkins’ Diet Revolution, it took on the additional meaning of a lifestyle of food consumption.

Kulkarni and co have observed similar, more recent trends on Twitter and Amazon, with changes in the usage of words such as “candy,” “streaming,” and “shades” that correspond to the popularity of the Candy Crush Saga game, the rise of video streaming, and the publication of Fifty Shades of Grey in June 2012.

That’s a fascinating insight into the evolution of language that shows how our language is changing at every scale and on a wide range of media. “This effect is especially prevalent on the Internet, where the rapid exchange of ideas can change a word’s meaning overnight,” say Kulkarni and co.

That could be useful for building language processing machines better able to understand the current lingo. But perhaps most useful of all is that it provides a record of the way language has changed and a mechanism for observing current changes more or less in real time.

Ref: arxiv.org/abs/1411.3315 : Statistically Significant Detection of Linguistic Change
