The Right Way to Fix the Internet

If you’re like most people, your monthly smartphone bill is steep enough to make you shudder. As consumers’ appetite for connectivity keeps growing, the price of wireless service in the United States tops $130 a month in many households.

Two years ago Mung Chiang, a professor of electrical engineering at Princeton, believed he could give customers more control. One simple adjustment would clear the way for lots of mobile-phone users to get as much data as they already did, and in some cases even more, on cheaper terms. Carriers could win, too, by nudging customers to reduce peak-period traffic, making some costly network upgrades unnecessary. “We thought we could increase the benefits for everyone,” Chiang recalls.

Chiang’s plan called for the wireless industry to offer its customers the same types of variable pricing that have brought new efficiencies to transportation and utilities. Rates increase during peak periods, when congestion is at its worst; they decrease during slack periods. In the pre-smartphone era, it would have been impossible to advise users ahead of time about a zig or zag in their connectivity charges. Now, it would be straightforward to vary the price of online access depending on congestion and build an app that let bargain hunters shift their activities to cheaper periods, even on a minute-by-minute basis. When prices were high, consumers could put off non-urgent tasks like downloading Facebook posts to read later. Careful users could save a lot of money.
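To make the mechanics concrete, here is a minimal sketch of how an app might shift a deferrable task to the cheapest hour in its allowed window. It is an illustration only, not DataMi's software: the `Task` fields, the `schedule` helper, and the prices (in whole cents per megabyte) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    megabytes: int
    earliest_hour: int  # first hour (0-23) in which the task may run
    latest_hour: int    # last hour in which the task may run, inclusive


def cheapest_hour(task, cents_per_mb):
    """Return the hour inside the task's window with the lowest price."""
    window = range(task.earliest_hour, task.latest_hour + 1)
    # On a tie, min() keeps the earliest hour in the window.
    return min(window, key=lambda hour: cents_per_mb[hour])


def schedule(tasks, cents_per_mb):
    """Map each task name to (chosen hour, cost in cents)."""
    plan = {}
    for task in tasks:
        hour = cheapest_hour(task, cents_per_mb)
        plan[task.name] = (hour, task.megabytes * cents_per_mb[hour])
    return plan


# Hypothetical price table: the evening peak (18:00-22:59) costs 4 cents
# per MB, every other hour costs 1 cent per MB.
PRICES = [4 if 18 <= hour <= 22 else 1 for hour in range(24)]

tasks = [
    Task("sync_feed", 100, earliest_hour=19, latest_hour=23),  # can wait
    Task("video_call", 50, earliest_hour=20, latest_hour=20),  # cannot wait
]
plan = schedule(tasks, PRICES)
```

In this toy setup the deferrable download slides to hour 23, the first off-peak hour in its window, while the urgent call pays the peak rate because it has nowhere else to go.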

Excited about the prospects, Chiang patented his key concepts. He formed a company, now known as DataMi, to build the necessary software. Venture capitalists and angel investors put $6 million into the company. A seasoned wireless executive, Harjot Saluja, signed on to be the chief executive, while prominent people such as Reed Hundt, a former chairman of the Federal Communications Commission, joined DataMi’s advisory board. Everything seemed aligned for Chiang and Saluja as they set out to make “smart data pricing” a reality.

Today, DataMi’s variable pricing idea is on ice. The startup has regrouped in favor of other services, including one that helps businesses calculate how much of their employees’ cell-phone bills should be reimbursed because of work-related usage. The reasons for the switch have little to do with DataMi’s technical ability to make good on the promise of variable pricing. In early user tests, it delivered everything that DataMi’s patents predicted.

But politics got in the way.

A huge debate has erupted about the degree to which Internet carriers should be subject to a concept known as net neutrality. In its simplest form, the idea is that Internet service providers such as AT&T, Comcast, and Verizon shouldn’t offer preferential treatment to certain types of content. Instead, they should send everything to their customers with their “best efforts”—as fast as they can manage. Nobody can pay your ISP for a “fast lane” to your house. Carriers can’t show favoritism toward any of their own services or applications. And nobody providing lawful content can be slowed or blocked.

At this point, net neutrality is only a principle and not a law. Though the FCC put an ambiguously worded version on the books in 2010, it was struck down this year by a federal appeals court. But now, as the FCC is deliberating how to redo the policy, it’s facing passionate demands to restore and possibly even tighten the rules, giving ISPs even less leeway to engage in what regulators have typically called “reasonable network management.”

Until about a year ago, Chiang and his colleagues thought their data-pricing idea had so much common-sense appeal that no one would regard it as an assault on net neutrality—even though it would let carriers charge people more for constant access. But then, as the debate heated up, everything got trickier. Ardent defenders of net neutrality began painting ever darker pictures of how the Internet could suffer if anyone treated anyone’s traffic differently. Even though Chiang and Saluja saw variable pricing as pro-consumer, they had no lobbyists or legal team and decided they couldn’t afford a drawn-out battle to establish that they weren’t on the wrong side.

For network engineers, DataMi’s about-face isn’t an isolated example. They fear that overly strict net neutrality rules could limit their ability to reconfigure the Internet so it can handle rapidly growing traffic loads.

Dipankar Raychaudhuri, who studies telecom issues as a professor of electrical and computer engineering at Rutgers University, points out that the Internet never has been entirely neutral. Wireless networks, for example, have been built for many years with features that help identify users whose weak connections are impairing the network with slow traffic and incessant requests for dropped packets to be resent. Carriers’ technology ensures that such users’ access is rapidly constrained, so that one person’s bad connection doesn’t create a traffic jam for everyone. In such situations, strict adherence to net neutrality goes by the wayside: one user’s experience is degraded so that hundreds of others don’t suffer. As Raychaudhuri sees it, the Internet has been able to progress because net neutrality has been treated as one of many objectives that can be balanced against one another. If net neutrality becomes completely inviolable, it’s a different story. Inventors’ hands are tied. Other types of progress become harder.

Rather than debate such subtleties, net neutrality’s loudest boosters have been staging a series of simplistic—but highly entertaining—skits in an effort to rally the public to their side. In September, popular websites such as Reddit and Kickstarter simulated page-loading debacles as a way of getting visitors to believe that if net neutrality isn’t enacted, the Internet could slow to a crawl. That argument has been picked up by TV comedians such as Jimmy Kimmel, who showed a track meet in which the best sprinters represented cable companies with their own fast lanes. A stumbling buffoon in his underwear portrayed the shabby delivery standards that everyone else would endure.

Even President Barack Obama has been publicly reminding regulators of his commitment to net neutrality. In August he declared, “You don’t want to start getting a differentiation in how accessible the Internet is to different users. You want to leave it open so the next Google and the next Facebook can succeed.”

Clearly, most Americans aren’t happy with their Internet service. It costs more to get online in the United States than just about anywhere else in the developed world, according to a 2013 survey by the New America Foundation. In fact, U.S. service is sometimes twice as expensive as what’s available in Europe—and slower, too. Meanwhile, the University of Michigan found in a recent public survey that U.S. Internet service providers rank dead last in customer satisfaction scores against 42 other industries. Specific failings range from unreliable service to dismal call-center performance.

With lots of U.S. consumers wanting the government to do something about Internet service, strengthening net neutrality feels like a way to do it. Given that most Internet providers are urging the FCC to let this principle disappear from the books, it’s natural to call for the opposite approach. Yet that would probably be the wrong move. It’s possible to overdose on something even as benign-sounding as neutrality.

Bitstreams

The two sides in the net neutrality debate sometimes seem to speak two different languages, rooted in two different ways of seeing the Internet. Their contrasting perspectives reflect the fact that the Internet arose in an ad hoc fashion; there is no Internet constitution to cite.

Nonetheless, many legal scholars like to point to their equivalent of the Federalist Papers: a 1981 article by computer scientists Jerome Saltzer, David Reed, and David Clark. The authors’ ambitions for that paper (“End-to-End Arguments in System Design”) had been modest: to lay out technical reasons why tasks such as error correction should be performed at the edges, or end points, of the network—where the users are—rather than at the core. In other words, ISPs should operate “dumb pipes” that merely pass traffic along. This paper took on a remarkable second life as the Internet grew. In his 2000 book Code, a discussion of how to regulate the Internet, Harvard law professor Lawrence Lessig said the lack of centralized control embodied in the 1981 end-to-end principle was “one of the most important reasons that the Internet produced the innovation and growth that it has enjoyed.”

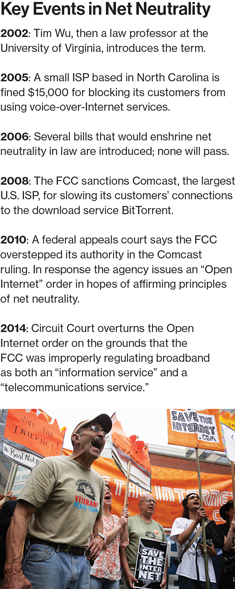

Tim Wu built on that idea in a 2002 article published when he was a law professor at the University of Virginia. In that and subsequent papers, he wrote that the end-to-end principle stimulated innovation because it made possible “a Darwinian competition among every conceivable use of the Internet so that only the best survive.” To promote that competition, he said, “network neutrality” would be necessary to eliminate bias for or against any particular application.

Wu acknowledged that this was a new concept, with “unavoidable vagueness” about the dividing line between allowable network-management decisions and impermissible bias. But he expressed hope that others would refine his idea and make it more precise.

That never happened. The line remains as blurry as ever, which is one reason the debate over net neutrality is so intense.

Barbara van Schewick, a leading Internet scholar at Stanford and a former member of Lessig’s research team, expresses concern that if profit-hungry companies are left unfettered to choose how to handle various types of traffic, they “will continue to change the internal structure of the Internet in ways that are good for them, but not necessarily for the rest of us.” She warns of the perils of letting Internet providers promote their own versions of popular services (such as Internet messaging or Internet telephony) while degrading or blocking customers’ ability to use independent services (such as WhatsApp in messaging or Skype in telephony). Such practices have occasionally popped up in Germany and other European markets, but they have rarely been seen in the United States, a disparity that van Schewick credits to the FCC’s explicit or implicit commitments to net neutrality.

Internet service providers such as AT&T have publicly insisted that they wouldn’t ever rig their networks to promote their own applications, because such obvious favoritism would cause customers to cancel service en masse. Skeptics counter that in many locales, consumers have little choice but to stick with their current broadband provider, because there is barely any competition.

Van Schewick also argues that it would be a mistake to let the likes of AT&T or Comcast charge independent content and service creators (including Internet telephony providers such as Skype or Vonage) to secure the best possible access to end users. Though such access fees exist in other industries—cereal and toothpaste companies, for example, pay “slotting fees” to major grocers in order to get optimal shelf space in stores—van Schewick warns that charging such fees to online companies would “make it more difficult for entrepreneurs to get outside funding.” In other recent writings, she has said it would be ill-advised to let carriers decide without input from customers whether to optimize different versions of their services for different types of traffic, such as video versus speech and text.

But while van Schewick and other advocates are trying to promote an “open Internet,” codifying too many overarching principles for the Internet makes many engineers uncomfortable. In their view, the network is a constant work in progress, requiring endless pragmatism. Its backbone is constantly being torn apart and rebuilt. The best means of connecting various networks with one another are always in flux.

“You can’t change congestion by passing net neutrality or doing that kind of thing,” says Tom Leighton, cofounder and chief executive of Akamai Technologies. His company has been speeding Internet traffic since the late 1990s, chiefly by providing more than 150,000 servers around the world that make it possible for content creators to store their most-demanded material as close to their various users as possible. It’s the kind of advance in network management that helped the Internet survive the huge increases in traffic over the last two decades. To keep traffic humming online, Leighton says, “you’re going to need technology.”

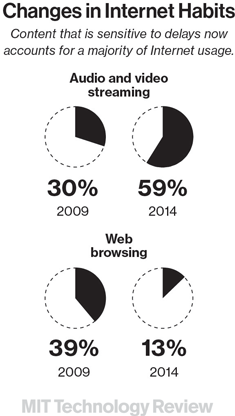

A central tenet of net neutrality is that “best efforts” should be applied equally when transmitting every packet moving through the Internet, regardless of who the sender, recipient, or carriers might be. But that principle merely freezes the setup of the Internet as it existed nearly a quarter-century ago, says Michael Katz, an economist at the University of California, Berkeley, who has worked for the FCC and consulted for Verizon. “You can say that every bit is a bit,” Katz adds, “but every bitstream isn’t the same bitstream.” Video and voice transmissions are highly vulnerable to errors, delays, and packet loss. Data transmissions can survive rougher handling. If some consumers want their Internet connections to deliver ultrahigh-resolution movies with perfect fidelity, those people would be better served, Katz argues, by more flexible arrangements that might indeed prioritize video. Efficiency might be more desirable than a strict adherence to equity for all bits.
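The kind of prioritization Katz describes can be sketched as a strict-priority queue on a single link. This is an illustration under stated assumptions, not a description of any carrier's equipment: the `PriorityLink` class and the ordering of traffic classes (voice, then video, then bulk data) are hypothetical choices made for the example.

```python
import heapq
from itertools import count

# Assumed ordering: lower number is sent first.
PRIORITY = {"voice": 0, "video": 1, "data": 2}


class PriorityLink:
    """A link that always sends the most delay-sensitive packet first."""

    def __init__(self):
        self._heap = []
        self._arrival = count()  # tie-breaker keeps first-in, first-out order

    def enqueue(self, kind, payload):
        entry = (PRIORITY[kind], next(self._arrival), payload)
        heapq.heappush(self._heap, entry)

    def dequeue(self):
        _, _, payload = heapq.heappop(self._heap)
        return payload

    def __len__(self):
        return len(self._heap)


def drain(link):
    """Send every queued packet and return them in transmission order."""
    sent = []
    while len(link):
        sent.append(link.dequeue())
    return sent


link = PriorityLink()
link.enqueue("data", "email-1")
link.enqueue("video", "frame-1")
link.enqueue("data", "email-2")
link.enqueue("voice", "call-1")
link.enqueue("video", "frame-2")
order = drain(link)
```

Under a pure "best efforts" rule the packets would leave in arrival order, with the email first. Here the voice and video packets jump ahead, and the email waits: the efficiency-versus-equity trade-off in miniature.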

House of Cards

About a year ago, Netflix’s customers noticed something disquieting when they tried to stream popular shows such as House of Cards. Their download speeds became annoyingly slow and some shows wouldn’t load at all, regardless of whether these customers relied on Time Warner Cable, Verizon, AT&T, or Comcast. Network congestion had taken hold—with transmission speeds dropping as much as 30 percent, according to Netflix’s own data. Last March, Netflix’s CEO, Reed Hastings, lashed out at the major U.S. Internet service providers, accusing them of constraining Netflix’s performance and pressuring his company to pay big interconnection fees.

Over the next few months, Netflix and its allies portrayed this slowdown as an example of cable companies’ most selfish behavior. In communications with the FCC, Netflix called for a “strong version” of net neutrality that would block the companies from charging fees to online service providers. In his blog, Hastings declared that net neutrality must be “defended and strengthened … to ensure the Internet remains humanity’s most important platform for progress.”

But the situation isn’t as black-and-white as Hastings’s indignant posts suggested.

For many years, high-volume sites run by Facebook, YouTube, Apple, and the like have been negotiating arrangements with many companies that ferry data to your Internet service provider—backbone operators, transit providers, and content delivery networks—to ensure that the most popular content is distributed as smoothly as possible. Often, this means paying a company such as Akamai to stash copies of highly in-demand content on multiple servers all over the world, so that a stampede for World Cup highlights creates as little strain as possible on the overall Internet.

There’s no standard way that these distribution arrangements are negotiated. Sometimes no money changes hands. In other situations, content companies pay for distribution. In theory, distribution companies could pay for content. In Netflix’s case, as demand has skyrocketed for its movies and TV shows, the company has negotiated a wide range of ways to help route its content around the Internet as efficiently as possible.

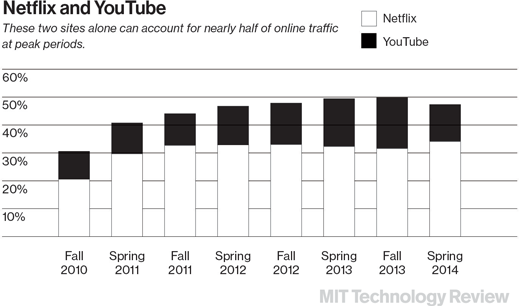

As Ars Technica reported earlier this year, Netflix started to realign its distribution methods in mid-2013. Its soaring traffic placed greater demands on all the Internet service providers that needed to handle House of Cards and its kin. By some estimates, Netflix last year was accounting for as much as one-third of all U.S. Internet traffic on Friday evenings. One of Netflix’s distribution allies (Level 3) restructured its terms with Comcast, reflecting the expenses associated with extra network connections, known as peering points, that Comcast needed to install in order to handle this rising traffic. Another (Cogent Communications) balked at the idea of defraying Comcast’s costs, and as a result, additional connections from Cogent to Comcast weren’t installed.

The result: Netflix’s videos began to stutter. In the short term, Netflix resolved the problem by paying for more of the peering points that carriers such as Comcast and Verizon required. More strategically, Netflix is arranging to put its servers in Internet service providers’ facilities, providing them with easier access to its content.

In the long run, carriers and content companies are likely to keep tussling about the ways they connect—simply because these are the sorts of business contracts that must be revisited as circumstances change. That’s why Hundt, FCC chairman from 1993 to 1997, says it’s a mistake to portray Netflix’s scuffle with the carriers as a critical test of the neutrality principle. It’s more like a routine business dispute, he says. “This is a battle between the rich and the wealthy,” he adds. “Both sides will have to figure out, on their own, how to get along.”

Hundt says the Netflix fight shouldn’t distract regulators who are trying to figure out the best way to keep the Internet open. They should be focusing, he says, on making sure that everyday customers are getting high-speed Internet as cheaply and reliably as possible, and that small-time publishers of Internet content can distribute their work. It’s worth noting that much of the lobbying in favor of net neutrality is coming from large, publicly traded companies that make momentary allusions to the well-being of garage-type startups but are mainly focused on disputes that apply to the Internet’s biggest players. A tiny video startup doesn’t generate enough volume to force Comcast to install extra peering points.

Zero Rating

In the rest of the world, where net neutrality is not insisted on, innovative approaches to wireless Internet pricing are catching on. At the top of the list is “zero rating,” in which consumers are allowed to try certain applications without incurring any bandwidth-usage charges. The app providers usually pay the wireless carriers to offer that access as a way of building up their market share in a hurry.

In much of Africa, people with limited usage plans can enjoy free access to Facebook or Wikipedia this way. In Europe, many music-streaming sites have hammered out arrangements with various wireless carriers in which zero-rating promotions become a major means of marketing. In China and South Korea, subsidized wireless options are springing up too. Such arrangements can help hold down mobile-phone bills and possibly even get people online for the first time.

In the United States, T-Mobile lets customers tap into a half-dozen music sites, such as Pandora and Spotify, without incurring usage charges. And AT&T has been experimenting with zero rating. But overall, things are moving slowly.

Consumers around the globe may find zero rating delightful, but net neutrality champions such as Jeremy Malcolm, senior global policy analyst at the Electronic Frontier Foundation, object on principle because it lets content providers pay carriers for access to consumers. In his view, carriers can’t be trusted in any situation that involves special deals for certain services.

When Tim Wu talked about net neutrality a decade ago, he framed it as a way of ensuring maximum competition on the Internet. But in the current debate, that rationale is in danger of being coöpted into a protectionist defense of the status quo. If there’s anything the Internet’s evolution has taught us, it’s that innovation comes rapidly, and in unexpected ways. We need a net neutrality strategy that prevents the big Internet service providers from abusing their power—but still allows them to optimize the Internet for the next wave of innovation and efficiency.

George Anders is a writer based in Northern California. He shared in the 1997 Pulitzer Prize given to the Wall Street Journal for national reporting.

This story was updated on October 15 to delete a reference to GreenByte. DataMi is still developing a service with that name even though it has put the variable-pricing aspect of it on hold.
