CES 2014: Intel’s 3-D Camera Heads to Laptops and Tablets

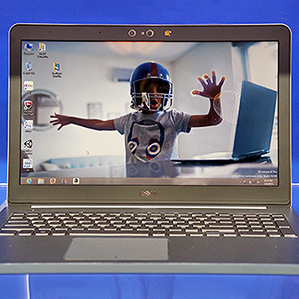

A combined 2-D and 3-D camera from Intel will be built into laptops and tablets from a range of manufacturers, the company announced at the International Consumer Electronics Show (CES) on Monday. The camera allows a device to be controlled with arm, hand, and finger gestures, and is also intended to allow software to capture and understand the world around it, including people’s facial expressions.

“We see and touch in 3-D, so why do we have to use computers in 2-D?” asked Mooly Eden, general manager of Intel’s Perceptual Computing Group, before unveiling the new depth-sensing technology, called the Intel RealSense 3-D camera.

He said that Intel intends the technology to become ubiquitous, just as conventional webcams have become standard on even the cheapest PCs. "This is something that we will be able to embed in many, many systems to drive the cost down," said Eden, brandishing a bare RealSense circuit board roughly five inches long and as thin as two stacked quarters.

Intel showed seven laptops and tablets from Dell, Lenovo, and Asus with the depth camera built in. These devices are slated to reach the market in the second half of 2014.

Eden introduced several demonstrations of applications for the new camera, including gaming, photography, and 3-D scanning. In one demonstration, a person waved his hand in front of a Windows computer to move the cursor, closed his hand to grab a virtual page, moved his hand to scroll it, and tapped in midair to perform the equivalent of a mouse click.

In another, depth sensing enhanced a tablet's camera app. While composing a photo, a few taps on the screen were enough to separate the foreground from the background and then apply color filters.

A similar function was demonstrated in a special version of Microsoft's Skype software: the depth camera isolated a video caller from his surroundings and placed him against various backgrounds.

Intel also announced a partnership with 3D Systems, which makes 3-D printers, to develop software that lets the depth-sensing cameras scan objects so that users can then edit or print a digital double. Several gesture-controlled games were also shown.

Eden said that enabling computers to sense in 3-D should eventually allow software to better understand human behavior: "The computer will be able to track with the 3-D camera exactly my facial expression and be able to respond appropriately." He added that this ability could be combined with voice-operated virtual assistant software so people could speak to their devices more naturally.

Like the depth-sensing technology in Microsoft's Kinect gaming system, Intel's contains two cameras (a conventional camera and one that senses infrared) as well as an infrared light source. (The device can infer depth by detecting infrared light that has bounced back from a scene.)

But Intel's technology will be much smaller than Microsoft's, enabling the first generation of laptops and tablets with depth sensing built in. Microsoft has for several years offered a version of its Kinect camera aimed at desktop computers (see "Microsoft's Plan to Bring About an Era of Gesture Control"), and other companies have launched depth-camera accessories (see "Depth Sensing Cameras Head to Mobile Devices"), but these products have had little success and are not compact enough to be integrated into PCs or tablets.

PrimeSense, which developed hardware used in Microsoft's Kinect, previously said it was working on a compact version of its technology (see "PC Makers Bet on Gaze, Gesture, Touch and Voice"). However, it has not publicly shown one small enough to be embedded in devices. PrimeSense was recently acquired by Apple, which is notoriously secretive about technology under development.

MIT Technology Review