The Future of Photography: Cameras With Wings (or Rotors)

The best panoramic images need to be taken from relatively high vantage points. In recent years, that’s become easier thanks to remote-control aircraft and helicopters that can hoist cameras aloft.

These machines have plummeted in price while their performance has sky-rocketed. Today, it’s possible to buy a decent quadcopter weighing 100 grams for just a few dollars and to fit it with a cheap camera that weighs less than 10 grams.

The problem, of course, is that the quality of images from these “keychain” cameras is poor. And carrying a better camera increases the costs dramatically. That’s because better image quality is generally the result of better lenses. These are made of glass and are therefore heavy. And a heavier camera requires a more powerful and expensive helicopter.

That would seem to rule out the possibility of helicopter-based imaging as a mass-market photographic tool.

Not so, says Camille Goudeseune at the University of Illinois at Urbana-Champaign. Today, he explains how to make high-quality panoramic images using the cheapest cameras attached to the smallest and lightest remote-control helicopters. And that raises the prospect of an interesting future for photography—of pulling a camera out of your pocket and letting it fly away before pressing the shutter button.

So how does Goudeseune do it? The answer is by stitching together many poor-quality images to make one high-quality panorama. That’s possible because of the advent of computer stitching algorithms that have automated this process.

So he starts with a cheap quadcopter that weighs less than a hamster and carries an even cheaper camera weighing about as much as a hummingbird. These cameras have a low resolution, typically 640 x 480 pixels, and cheap plastic lenses that limit their utility. On the other hand, they can take images at up to 60 frames per second and store up to an hour’s worth of footage on an 8 GB microSD card.

With this kind of setup, taking a panorama sounds easy, but in practice it is rather tricky. Stitching algorithms start with the assumption that all the images were taken from the same spot. That’s not necessarily the case for pictures taken from a quadcopter that can be buffeted by the lightest breeze.

But with care, good images are possible. Goudeseune says the trick is to yaw, or pirouette, the helicopter while it is hovering. This movement needs to be slow since the stitching is better when frames overlap. “Some drifting is tolerable if the subject is not very nearby,” he says.
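To see why a slow yaw gives the stitcher plenty of overlap, here is a rough back-of-the-envelope sketch in Python. It is not taken from Goudeseune's paper: the function names, the 60-degree horizontal field of view and the 30-degrees-per-second yaw rate are all illustrative assumptions, and the model ignores drift and lens distortion.

```python
def overlap_fraction(yaw_rate_deg_s, fps, hfov_deg):
    """Approximate fraction of the view shared by two consecutive frames.

    Assumes a pure yaw (no drift) and a simple flat model in which the
    view shifts by yaw_rate / fps degrees between frames.
    """
    step_deg = yaw_rate_deg_s / fps
    return max(0.0, 1.0 - step_deg / hfov_deg)


def frame_stride(yaw_rate_deg_s, fps, hfov_deg, target_overlap):
    """How many video frames apart two kept frames can be while still
    sharing roughly target_overlap of the view (same assumptions)."""
    if yaw_rate_deg_s <= 0:
        raise ValueError("yaw rate must be positive")
    step_deg = yaw_rate_deg_s / fps
    allowed_deg = hfov_deg * (1.0 - target_overlap)
    return max(1, int(allowed_deg / step_deg))


# Hypothetical numbers: 60 frames per second (from the article),
# a 60-degree field of view and a 30 deg/s pirouette (both assumed).
print(overlap_fraction(30, 60, 60))
print(frame_stride(30, 60, 60, 0.5))
```

Under these assumed numbers, consecutive frames are almost identical, so only about one frame in 60 would be needed for a 50 percent overlap. That leaves plenty of spare frames to choose from when some are blurred or spoiled by drift.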

Goudeseune also has some tips about how best to process the images: removing duplicate frames, tackling the blockiness caused by the JPEG compression algorithm and avoiding the moiré effects caused by repeating patterns.
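The article does not say how duplicate frames are detected, so the following Python sketch swaps in one simple possibility: drop any frame whose average pixel difference from the last kept frame falls below a threshold. The function names, the threshold value and the representation of frames as non-empty, equal-sized nested lists of grayscale values are all assumptions made for illustration.

```python
def mean_abs_diff(frame_a, frame_b):
    """Mean absolute difference between two equal-sized grayscale frames,
    each given as a list of rows of integer pixel values."""
    total = 0
    count = 0
    for row_a, row_b in zip(frame_a, frame_b):
        for pixel_a, pixel_b in zip(row_a, row_b):
            total += abs(pixel_a - pixel_b)
            count += 1
    return total / count


def drop_duplicates(frames, threshold=1.0):
    """Keep a frame only if it differs from the last kept frame by more
    than threshold (a hypothetical value; tune it per camera)."""
    kept = []
    for frame in frames:
        if not kept or mean_abs_diff(kept[-1], frame) > threshold:
            kept.append(frame)
    return kept


# Tiny 2 x 2 "frames": b repeats a, c differs only by noise, d is new.
a = [[10, 10], [10, 10]]
b = [[10, 10], [10, 10]]
c = [[10, 11], [10, 10]]
d = [[50, 60], [70, 80]]
print(len(drop_duplicates([a, b, c, d])))  # prints 2
```

Comparing against the last kept frame, rather than the immediately preceding one, stops a long run of nearly identical frames from slipping through one small step at a time.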

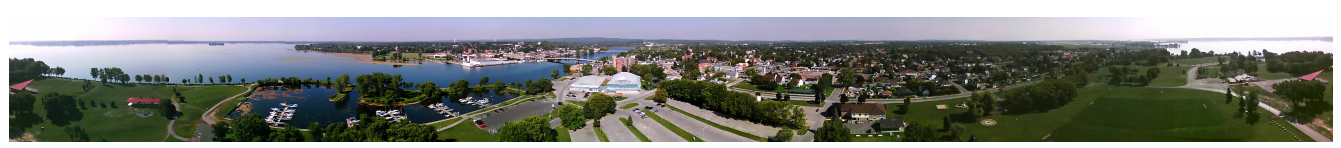

The image above shows how good Goudeseune’s technique can be. It’s a full 360-degree panorama of Trenton, Ontario, taken earlier this year. He says it was “captured in midmorning while waiting ten minutes for the beer store to open.” As the saying goes, the best camera is the one you have with you.

That’s an impressive result given the equipment involved. And it opens a new chapter for photography that until now has been the preserve of science fiction: overhead images taken by unobtrusive remote-control helicopters.

And Goudeseune is leading the way. He says he once used a sub-100-gram quadcopter carrying an 8-gram camera to photograph a family wedding’s outdoor reception without anybody noticing. Of course, the thorny issue of privacy that this raises is another question.

One thing is for sure: photography is changing. It wasn’t long ago that a good photograph required experience and a camera bag full of specialist lenses, films and equipment. Now most people get by with the camera on their smartphone.

And it’s just possible that the next generation of photographers will think nothing of releasing their cameras to the skies, like doves to the wind.

Ref: arxiv.org/abs/1311.6500: Stitched Panoramas from Toy Airborne Video Cameras
