A Gestural Interface for Smart Watches

If just thinking about using a tiny touch screen on a smart watch has your fingers cramping up, researchers at the University of California at Berkeley and Davis may soon offer some relief: they’re developing a tiny chip that uses ultrasound waves to detect a slew of gestures in three dimensions. The chip could be embedded in wearable gadgets.

The technology, called Chirp, is slated to be spun out into its own company, Chirp Microsystems, which will produce the chips and sell them to hardware manufacturers. The researchers hope that Chirp will eventually be used in everything from helmet cams to smart watches: basically any electronic device you want to control but have no convenient way of controlling.

“There aren’t a whole lot of options of what you can do on a touch screen when it’s about the size of a quarter or so,” says Richard Przybyla, a graduate student at UC Berkeley’s Berkeley Sensor & Actuator Center, who designed the ultrasound chip.

Chirp is one of a growing number of efforts to bring gesture controls to all kinds of consumer electronics; others include Microsoft’s Kinect and Leap Motion’s Leap Motion Controller. Some methods aim to make it easier to integrate gesture controls into gadgets like laptops and smartphones by using hardware already built into the device: Microsoft Research’s SoundWave project relies on your speaker and microphone, while Flutter, recently acquired by Google, uses your webcam.

But Chirp’s team believes that its technology, which requires building both an electronics chip and an ultrasound chip into the device you want to control, allows for much more accurate gesture detection and lower power consumption, and can work in the dark or in bright light. That would make it ideal for small electronics such as smart watches and head-mounted computers like Google Glass.

Chirp uses sonar via an array of ultrasound transducers—small acoustic resonators—that send ultrasonic pulses outward in a hemisphere, echoing off any objects in their path (your palm, for instance). Those echoes come back to the transducers, and the elapsed time is measured by a connected electronic chip. When using a two-dimensional array of transducers, the time measurements can be used to detect a range of hand gestures in three dimensions within a distance of about a meter.
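The time-of-flight idea described above can be sketched in a few lines. This is a minimal illustration, not Chirp's actual signal processing: the speed-of-sound value, the function names, and the two-transducer, far-field angle estimate are all assumptions made for the example.

```python
import math

# Assumed speed of sound in dry air at roughly 20 degrees C (not from the article).
SPEED_OF_SOUND_M_S = 343.0


def echo_range_m(round_trip_s: float, c: float = SPEED_OF_SOUND_M_S) -> float:
    """Distance to a reflector from the echo's round-trip time.

    The pulse travels out and back, so the one-way distance is half the path.
    """
    return c * round_trip_s / 2.0


def arrival_angle_deg(delta_t_s: float, spacing_m: float,
                      c: float = SPEED_OF_SOUND_M_S) -> float:
    """Hypothetical bearing estimate from two transducers in the array.

    Assumes a far-field echo: the extra path to the farther transducer is
    spacing * sin(angle), so sin(angle) = c * delta_t / spacing.
    """
    ratio = c * delta_t_s / spacing_m
    ratio = max(-1.0, min(1.0, ratio))  # guard against measurement noise
    return math.degrees(math.asin(ratio))


if __name__ == "__main__":
    # A hand about a meter away returns its echo after roughly 5.8 milliseconds.
    print(echo_range_m(2.0 / SPEED_OF_SOUND_M_S))
    # An echo arriving straight on reaches both transducers at the same time.
    print(arrival_angle_deg(0.0, spacing_m=0.001))
```

A linear array yields a range and one angle, which matches the two dimensions available in the demo; a two-dimensional array adds a second angle, and with it the third dimension.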

Przybyla showed me a demo of Chirp at his lab at UC Berkeley, where the chips that make it up were hooked up to a computer, allowing me to control a computer-animated plane’s flight path on a monitor by moving my hand in front of the display. Since the demo included a linear array of transducers, rather than a two-dimensional array, I was only able to check out Chirp in two dimensions (meaning I could control the plane’s side-to-side and forward-and-backward movements, but could not move it up and down). The group has built a chip with a two-dimensional array, but Przybyla says it’s still working to improve Chirp’s ability to track that up-and-down angle. The demo was noticeably easier to control on my first try than some other kinds of gesture-recognition technologies I’ve tried, and it didn’t seem to require any calibration to sense most of my movements accurately.

Przybyla says the researchers behind Chirp envision determining a basic set of gesture commands that could be programmed into Chirp-enabled devices, like pulling your hand away from your smartphone’s screen in order to zoom in on a photo.

Because the system uses sound, which travels much more slowly than light, it can use low-speed electronics for sensing. That dramatically lowers the system’s overall power consumption, Przybyla says, allowing it to run continuously off a watch battery for 30 hours.
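A rough calculation shows why slower waves permit slower electronics. The sketch below compares how finely a sensor must resolve round-trip time to distinguish a one-millimeter change in range using sound versus light. The one-millimeter step and the speed-of-sound figure are illustrative assumptions, not numbers from the Chirp team.

```python
# Assumed speed of sound in dry air at roughly 20 degrees C.
SPEED_OF_SOUND_M_S = 343.0
# Speed of light in vacuum (exact by definition).
SPEED_OF_LIGHT_M_S = 299_792_458.0


def round_trip_time_s(range_step_m: float, wave_speed_m_s: float) -> float:
    """Change in round-trip time caused by a given change in range."""
    return 2.0 * range_step_m / wave_speed_m_s


# Illustrative range resolution of one millimeter (an assumption).
step_m = 0.001
sound_dt = round_trip_time_s(step_m, SPEED_OF_SOUND_M_S)
light_dt = round_trip_time_s(step_m, SPEED_OF_LIGHT_M_S)

print(f"sound: {sound_dt * 1e6:.2f} microseconds")
print(f"light: {light_dt * 1e12:.2f} picoseconds")
print(f"sound allows timing about {sound_dt / light_dt:,.0f} times coarser")
```

Under these assumptions the ultrasound sensor needs timing resolution of a few microseconds, while an equivalent light-based measurement would need a few picoseconds, nearly a million times finer.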

Chris Harrison, a founder and the chief technology officer of Qeexo, a company that makes new touch-screen interface technology, is impressed by the power-consumption claims of Chirp’s team. While there are potential drawbacks like figuring out when a user is trying to, say, open a message on a smart watch versus simply moving his hand near his smart watch, Harrison can imagine the utility of Chirp on such gadgets, whose itty-bitty displays can make them a pain to use.

“If you can move that interaction to the air around it, which is many times larger, it has the potential of alleviating that bottleneck,” he says.

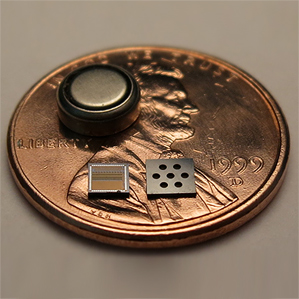

Right now, Chirp tracks only hand motions, but the team may eventually try tracking individual fingers, Przybyla says, a move that could enable better recognition and perhaps a broader range of identifiable movements. The current chips the group is using are about five millimeters across; they can be made as small as one to two millimeters and still be able to track basic hand gestures.
