Cryptographers Have an Ethics Problem

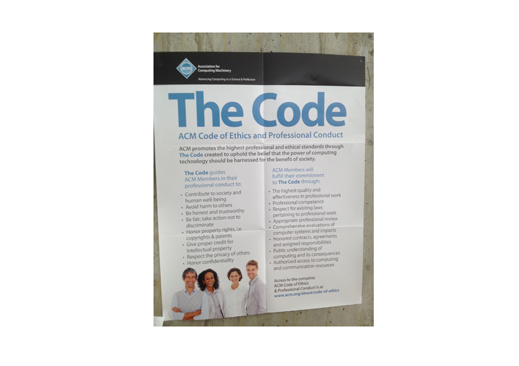

Last week, I visited the MIT computer science department looking for a very famous cryptographer. As I made my way through the warren of offices, I noticed a poster taped to the wall—the kind put up to inform or inspire students. It was the code of ethics of the Association for Computing Machinery, the world’s largest professional association of computer scientists.

The code is a list of 16 “moral imperatives.” Two items immediately jumped out:

1.3 - Be honest and trustworthy.

1.7 - Respect the privacy of others.

This got me thinking. It looks as if the code-breakers at the National Security Agency, and possibly the academics who often assist them, are in clear, dramatic breach of their own profession’s code of conduct.

At first, this may seem like small potatoes. According to secret documents leaked by a former contractor, Edward Snowden, the NSA has been gathering records of the communications of all Americans and has also defeated widely used encryption protocols so it can read them (see “NSA Surveillance Reflects a Broader Interpretation of the Patriot Act”).

If, as some charge, the NSA has broken laws and violated the U.S. Constitution, then it has committed criminal acts far worse than trampling on a voluntary code of conduct. Alternatively, if you think the NSA’s snooping is justified to defend the country, then who cares about a pious-sounding ethics statement anyway?

But it does matter. The reason is that building an atomic bomb, or breaking the toughest cipher, is something only a few experts can manage. So experts face special moral dilemmas. How they resolve them has to do exactly with a group sense of ethics, the kind that’s embodied by codes of behavior or credos.

“I think this is really interesting,” says Bruce Schneier, a computer security expert who has had access to some of the Snowden documents and has been critical of the government. “NSA employees and their corporate collaborators are breaking that code of ethics.”

Actually, many academics are involved, too, if less directly. That is because the discipline of cryptography is highly militarized already. Today, the U.S. government spends $10.5 billion a year on signals intelligence and employs 30,000 people to do it, according to budget documents leaked by Snowden. University researchers frequently collaborate. They take sabbaticals with the NSA and get funding from the military to work on interesting problems. Many got their start on government projects.

Not every technical discipline faces similar pitfalls, and some have made different choices. Some leading robotics experts have told me they are totally opposed to helping build Terminator-type robots (that may be why we haven’t seen one yet). And in American biology laboratories, you’d be hard-pressed to find anyone doing research aimed at harming another person, terrorist or not. It’s simply not on the agenda. (Part of the reason is that President Richard Nixon disclosed and ended the secret U.S. germ warfare program in 1969.)

Eugene Spafford, executive director of the CERIAS institute at Purdue University and an officer of the ACM, cautioned me against reaching simplistic ethical judgments. He said if a person is hacking computers and stealing messages to prevent a terrorist attack, they’re not necessarily in violation of the society’s code, which allows for “varying interpretations.”

Indeed, the code says “any ethical principle may conflict with other ethical principles in specific situations.” In other words, ethics are relative. This is the logic that says a soldier can kill in war, even though doing the same in peacetime is a crime.

And that may be why cryptographers see blurred ethical lines. Code-making and code-breaking have always been tied up with war and diplomacy. For instance, Alan Turing, the brilliant mathematician for whom the ACM’s top annual prize is named, helped crack the Nazi Enigma cipher during World War II, which allowed the Allies to read German communications.

Yet something important has changed since those days. Before, only diplomats and spies used coded messages. Today, all of us do. Every Gmail message you send is encrypted, as are Skype calls. That is why the NSA has apparently made sure that it can break that sort of cryptography (see “NSA Leak Leaves Crypto-Math Intact but Highlights Known Workarounds”). This breaking or defeating of public encryption is a new moral hazard into which the experts have fallen. Because they are now deploying technology to spy on all of us, they can’t so easily invoke national security as an ethical trump card.

Snowden, the NSA whistleblower who fled to Russia (where he remains), has explained how he made his own “moral decision to tell the public about spying that affects us all.” His public statement is worth reading. Snowden says that he chose to “transcend” obedience to the U.S. government in order to obey universal rules of human conduct.

I don’t know exactly what code of ethics Snowden follows. The ACM, out of respect for privacy, declined to tell me if he was a member.
