Google and NASA Launch Quantum Computing AI Lab

Quantum computing took a giant leap forward on the world stage today as NASA and Google, in partnership with a consortium of universities, launched an initiative to investigate how the technology might lead to breakthroughs in artificial intelligence.

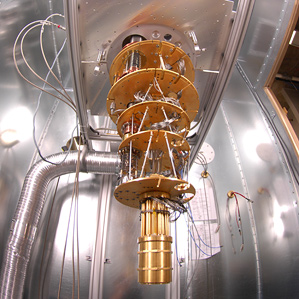

The new Quantum Artificial Intelligence Lab will employ what may be the most advanced commercially available quantum computer, the D-Wave Two, which a recent study confirmed was much faster than conventional machines at solving specific problems (see “D-Wave’s Quantum Computer Goes to the Races, Wins”). The machine will be installed at the NASA Advanced Supercomputing Facility at the Ames Research Center in Silicon Valley and is expected to be available for government, industrial, and university research later this year.

Google believes quantum computing might help it improve its Web search and speech recognition technology. University researchers might use it to devise better models of disease and climate, among many other possibilities. As for NASA, “computers play a much bigger role within NASA missions than most people realize,” says quantum computing expert Colin Williams, director of business development and strategic partnerships at D-Wave. “Examples today include using supercomputers to model space weather, simulate planetary atmospheres, explore magnetohydrodynamics, mimic galactic collisions, simulate hypersonic vehicles, and analyze large amounts of mission data.”

Quantum computers exploit the bizarre quantum-mechanical properties of atoms and other building blocks of the cosmos. At its very smallest scale, the universe becomes a fuzzy, surreal place: objects can seemingly exist in more than one place at once or spin in opposite directions at the same time.

While regular computers represent data in bits, 1s and 0s expressed by flicking tiny switch-like transistors on or off, quantum computers use quantum bits, or qubits, that can essentially be both on and off, enabling them to carry out two or more calculations simultaneously. In principle, quantum computers could prove vastly faster than normal computers for certain problems because they can run through every possible combination at once. In fact, a quantum computer with 300 qubits could run more calculations in an instant than there are atoms in the universe.
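The 300-qubit claim rests on back-of-the-envelope arithmetic: n qubits span 2^n basis states, and 2^300 is about 2 × 10^90, while the number of atoms in the observable universe is commonly estimated at around 10^80. The short Python sketch below checks those magnitudes. The 10^80 figure is an assumed order-of-magnitude estimate, not an exact count, and counting states says nothing about how many answers can actually be read out of the machine.

```python
# Back-of-the-envelope check of the "300 qubits vs. atoms in the universe" claim.

# Assumption: ~10**80 atoms in the observable universe (order-of-magnitude estimate only).
ATOMS_ESTIMATE = 10 ** 80


def basis_states(n_qubits: int) -> int:
    """Number of classical bit patterns (basis states) that n qubits can superpose."""
    return 2 ** n_qubits


def min_qubits_exceeding(target: int) -> int:
    """Smallest n with 2**n > target; for a positive int this equals its bit length."""
    return target.bit_length()


if __name__ == "__main__":
    states = basis_states(300)
    print(f"2**300 has {len(str(states))} decimal digits")  # 91 digits, i.e. ~2e90
    print("exceeds atom estimate:", states > ATOMS_ESTIMATE)  # True
    print("qubits needed to pass 1e80:", min_qubits_exceeding(ATOMS_ESTIMATE))  # 266
```

On this estimate, about 266 qubits already cross the 10^80 mark, so 300 qubits clear it by a factor of roughly 2 × 10^10.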

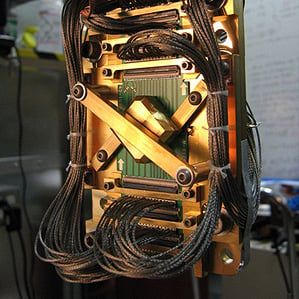

D-Wave, which bills itself as the first commercial quantum computer company, has backers that include Amazon.com founder Jeff Bezos and the CIA’s investment arm In-Q-Tel (see “The CIA and Jeff Bezos Bet on Quantum Computing”). It sold its first quantum computing system, the 128-qubit D-Wave One, to the military contractor Lockheed Martin in 2011. Earlier this year it upgraded that machine to a 512-qubit D-Wave Two—reputedly for about $15 million, which might be roughly what the new Quantum Artificial Intelligence Lab paid for its device.

The collaboration between NASA, Google, and the Universities Space Research Association (USRA) aims to use its computer to advance machine learning, a branch of artificial intelligence devoted to developing computers that can improve with experience. Much of machine learning boils down to optimization, a task that may be easier for quantum computers than for conventional machines.

For instance, imagine trying to find the lowest point on a surface covered in hills and valleys. A classical computer might start at a random spot on the surface and look around for a lower spot to explore until it cannot walk downhill anymore. This approach can often get stuck in a local minimum, a valley that is not actually the very lowest point on the surface. On the other hand, quantum computing could make it possible to tunnel through a ridge to see if there is a lower valley beyond it.
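The hill-and-valley picture can be made concrete with a purely classical sketch. The landscape heights below are invented for illustration, and the code models only the downhill walker described above; it does not simulate quantum tunneling. It shows how a greedy search that starts in the wrong valley halts at a local minimum even though a lower point exists.

```python
from typing import List, Sequence


def greedy_descent(heights: Sequence[float], start: int) -> int:
    """Step to the lowest neighboring point while that lowers the height; return where it stops."""
    pos = start
    while True:
        neighbors: List[int] = [i for i in (pos - 1, pos + 1) if 0 <= i < len(heights)]
        if not neighbors:
            return pos  # single-point landscape: nowhere to go
        best = min(neighbors, key=lambda i: heights[i])
        if heights[best] >= heights[pos]:
            return pos  # no downhill move left: a minimum, but possibly only a local one
        pos = best


def global_minimum(heights: Sequence[float]) -> int:
    """Exhaustively scan every point; fine for a toy landscape, infeasible for huge search spaces."""
    return min(range(len(heights)), key=lambda i: heights[i])


# Hypothetical 1-D landscape: a shallow valley (index 2) and, past a ridge, a deeper one (index 7).
landscape = [5, 3, 1, 4, 6, 4, 2, 0, 3]

if __name__ == "__main__":
    print(greedy_descent(landscape, start=0))  # 2: stuck in the shallow valley
    print(greedy_descent(landscape, start=5))  # 7: started on the right side of the ridge
    print(global_minimum(landscape))  # 7
```

Practical classical optimizers add tricks such as random restarts or simulated annealing to escape such traps; quantum tunneling is proposed as another way past the ridge.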

“Looks like win-win-win to me—Google, NASA, and USRA bring unique skills and an interest in novel applications to the field,” says Seth Lloyd, a quantum-mechanical engineer at MIT. “In my opinion, the focus on factoring and code-breaking for quantum computers has overemphasized the quest for constructing a large-scale quantum computer, while slighting other potentially more useful and equally interesting applications. Quantum machine learning is an example of a smaller-scale application of quantum computing.”

Over the years, many critics have questioned whether D-Wave’s machines are actually quantum computers and whether they are any more powerful than conventional machines. The standard approach toward operating quantum computers, called the gate model, involves arranging qubits in circuits and making them interact with each other in a fixed sequence. In contrast, D-Wave starts off with a set of noninteracting qubits (a collection of superconducting loops kept at their lowest energy state, called the ground state) and then slowly, or “adiabatically,” transforms this system into one whose qubit interactions make its ground state represent the correct answer for the specific problem the researchers programmed it to solve.
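What “programming” such a machine means can be sketched in a few lines. Annealers of D-Wave’s type are commonly described as minimizing an Ising energy, E(s) = Σ h_i s_i + Σ J_ij s_i s_j over spins s_i ∈ {−1, +1}, where per-qubit biases h_i and pairwise couplings J_ij encode the problem and the lowest-energy spin configuration is the answer. The Python below finds that ground state by brute force for a hypothetical three-qubit problem; the h and J values are made up, and exhaustive search is used only because it is easy to check. Its cost doubles with every added spin, which is the scaling an annealer is hoped to sidestep.

```python
from itertools import product
from typing import Dict, Tuple

Spins = Tuple[int, ...]


def ising_energy(spins: Spins, h: Dict[int, float], J: Dict[Tuple[int, int], float]) -> float:
    """E(s) = sum_i h_i * s_i + sum_(i,j) J_ij * s_i * s_j, with each s_i in {-1, +1}."""
    field = sum(h.get(i, 0.0) * s for i, s in enumerate(spins))
    coupling = sum(j_ij * spins[i] * spins[j] for (i, j), j_ij in J.items())
    return field + coupling


def brute_force_ground_state(n: int, h: Dict[int, float], J: Dict[Tuple[int, int], float]) -> Spins:
    """Try all 2**n spin assignments and return one with the lowest energy."""
    return min(product((-1, 1), repeat=n), key=lambda s: ising_energy(s, h, J))


# Hypothetical 3-qubit problem: a bias nudging spin 0 toward +1, and positive
# couplings that reward neighboring spins for pointing in opposite directions.
h = {0: -0.5}
J = {(0, 1): 1.0, (1, 2): 1.0}

if __name__ == "__main__":
    ground = brute_force_ground_state(3, h, J)
    print(ground, ising_energy(ground, h, J))  # (1, -1, 1) -2.5
```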

Many scientists have wondered whether the approach D-Wave used was vulnerable to disturbances that might keep qubits from working properly. But independent researchers recently found that D-Wave’s computers can actually solve certain problems up to 3,600 times faster than classical computers. Before choosing the D-Wave Two, NASA, Google, and USRA ran the computer past a series of benchmark and acceptance tests. It passed, in some cases by a giant margin.

USRA will invite researchers across the United States to use the machine. Twenty percent of its computing time will be open to the university community at no cost through a competitive selection process, while the rest of it will be split evenly between NASA and Google. “We’ll be having some of the best and brightest minds in the country working on applications that run on the D-Wave hardware,” Williams says.

MIT Technology Review

Discover special offers, top stories, upcoming events, and more.