What Twitter Learns from All Those Tweets

Twitter messages might be limited to 140 characters each, but all those characters can add up. In fact, they add up to 12 terabytes of data every day.

“That would translate to four petabytes a year, if we weren’t growing,” said Kevin Weil, Twitter’s analytics lead, speaking at the Web 2.0 Expo in New York. Weil estimated that users would generate 450 gigabytes during his talk. “You guys generate a lot of data.”
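Weil's figures can be checked with quick arithmetic. The sketch below assumes decimal units (1 TB = 1,000 GB, 1 PB = 1,000 TB) and a constant 12 terabytes per day; the function names are illustrative and do not come from Twitter.

```python
# Back-of-the-envelope check of the data volumes quoted in the article.
# Assumptions (not from the article): decimal units and a constant daily rate.
TB_PER_DAY = 12
GB_PER_TB = 1000
TB_PER_PB = 1000


def yearly_petabytes(tb_per_day, days=365):
    """Petabytes accumulated over `days` at a constant daily rate."""
    return tb_per_day * days / TB_PER_PB


def minutes_to_generate(gigabytes, tb_per_day):
    """Minutes needed to produce `gigabytes` at a constant daily rate."""
    gb_per_minute = tb_per_day * GB_PER_TB / (24 * 60)
    return gigabytes / gb_per_minute


if __name__ == "__main__":
    # Roughly 4.4 PB a year, in line with the "four petabytes" quoted.
    print(round(yearly_petabytes(TB_PER_DAY), 2))
    # 450 GB at this rate corresponds to a talk of a little under an hour.
    print(round(minutes_to_generate(450, TB_PER_DAY), 1))
```

Under these assumptions, the 450-gigabyte estimate implies a talk of about 54 minutes.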

This wealth of information seems overwhelming, but Twitter believes it contains insights that could be useful to it as a business. For example, Weil said the company tracks when users shift from posting infrequently to becoming regular participants, and looks for features that might have influenced the change. The company has also determined that users who access the service from mobile devices typically become much more engaged with the site. Weil noted that this supports the push to offer Twitter applications for Android phones, iPhones, BlackBerrys, and iPads. And Weil said Twitter will be watching closely to see if the new design of its website increases engagement as much as the company hopes it will.

Credit: Phillie Casablanca

Of course, Twitter also tracks simple statistics, such as how many searches are being performed on its site, where users are located, and which domains users link to most frequently. Weil said the company also uses machine-learning techniques to figure out what kinds of tweets resonate most with users (those tweets are reposted automatically through its “TopTweets” account).

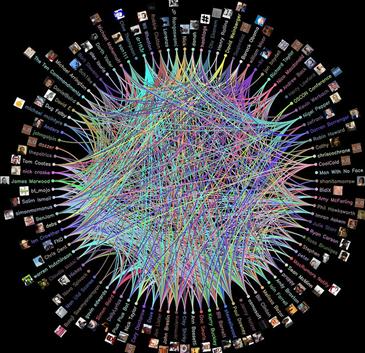

Twitter is also asking some more open-ended questions. Weil said the company is interested in what influences retweets (posts from one user that are reposted by another). And Twitter has discovered that it can make good guesses about the topics a user is interested in by looking at the accounts that user follows that don’t follow back.
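The follow-graph idea can be illustrated with a toy example. Weil did not describe how Twitter actually does this, so the following is only a minimal sketch. It assumes the follow graph fits in a dictionary and that each followed account already carries a topic label. The accounts, labels, and function names are all hypothetical.

```python
from collections import Counter


def one_way_follows(follows, user):
    """Accounts that `user` follows but that do not follow `user` back."""
    return {
        account
        for account in follows.get(user, set())
        if user not in follows.get(account, set())
    }


def guess_topics(follows, account_topics, user):
    """Count the (hypothetical) topic labels of a user's one-way follows."""
    return Counter(
        account_topics[account]
        for account in one_way_follows(follows, user)
        if account in account_topics
    )


# Made-up data: who follows whom, and a topic label for some accounts.
follows = {
    "alice": {"bob", "nasa", "espn"},
    "bob": {"alice"},  # mutual follow, more likely a personal connection
    "nasa": set(),
    "espn": set(),
}
account_topics = {"nasa": "space", "espn": "sports", "bob": "friends"}

if __name__ == "__main__":
    print(sorted(one_way_follows(follows, "alice")))
    print(guess_topics(follows, account_topics, "alice"))
```

The intuition in this sketch is that mutual follows tend to reflect personal relationships, while one-way follows are more likely to reflect interest in what an account posts.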

Asking such specific questions of huge quantities of data is a common problem for successful Web companies. Weil explained that Twitter benefits from a variety of open-source software developed by companies such as Google, Yahoo, and Facebook. These tools are designed to deal with storing and processing data that’s too voluminous to manage on even the largest single machine.

Even so, Twitter sometimes struggles with not having enough hardware. Weil said the company has run out of space in its data center, and that the 100-machine cluster it currently uses to process data is significantly less powerful than what it really needs. Twitter plans to move to a new data center later this year, and he hopes to get three to four times the capacity there.

Weil also said that Twitter is interested in doing more real-time analysis of tweets, but he didn’t give details about how the company plans to mine this new trove of data.
